PixelFlow allows you to use all these features

Unlock the full potential of generative AI with Segmind. Create stunning visuals and innovative designs with total creative control. Take advantage of powerful development tools to automate processes and models, elevating your creative workflow.

Segmented Creation Workflow

Gain greater control by dividing the creative process into distinct steps, refining each phase.

Customized Output

Customize at various stages, from initial generation to final adjustments, ensuring tailored creative outputs.

Layering Different Models

Integrate and utilize multiple models simultaneously, producing complex and polished creative results.

Workflow APIs

Deploy PixelFlow workflows as APIs quickly, without server setup, ensuring scalability and efficiency.

Flux.1 Dev

The Flux Dev model by Black Forest Labs is a text-to-image model that generates and transforms visual content from simple text inputs. It lets users create high-quality images with minimal effort.

How to Use the Model

To use the Flux Dev model:

- Input Text Prompt: Provide a textual description of the desired image. The model processes this input to generate a corresponding visual output.
- Run the Model: Execute the model with your text input. The model interprets the description to produce an image.
- Review Outputs: Evaluate the generated images for quality and relevance to your input.
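The three steps above can be sketched as a small Python client. This is a minimal illustration under stated assumptions, not the official API: the endpoint URL is a placeholder, and the `prompt` field, the `x-api-key` header, and the helper names `build_request`, `run_model`, and `save_for_review` are all hypothetical. Check the provider's API documentation for the real endpoint, authentication scheme, and parameters.

```python
import json
import urllib.request

# Hypothetical placeholder endpoint; replace with the real URL from the API docs.
API_URL = "https://api.example.com/v1/flux-dev"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Step 1: package the text prompt as a JSON POST request."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    # The "prompt" field name and "x-api-key" header are assumptions.
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    headers = {"Content-Type": "application/json", "x-api-key": api_key}
    return urllib.request.Request(API_URL, data=body, headers=headers, method="POST")


def run_model(request: urllib.request.Request, timeout: float = 120.0) -> bytes:
    """Step 2: send the request and return the raw response body.

    Assumes the service replies with image bytes; a real API may instead
    return JSON containing a URL or base64 data.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()


def save_for_review(image_bytes: bytes, path: str) -> str:
    """Step 3: write the output to disk so it can be inspected by hand."""
    with open(path, "wb") as f:
        f.write(image_bytes)
    return path
```

Against a real endpoint, usage would look like `save_for_review(run_model(build_request("a lighthouse at dusk", key)), "out.png")`. Keeping request construction separate from the network call makes the prompt handling easy to check without spending API credits.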

Use Cases

- Graphic Design: Automate the creation of graphics based on simple text descriptions, saving time on repetitive design tasks.
- Advertising: Generate visual content tailored to marketing campaigns, quickly producing assets that align with brand messages.
- Content Creation: Assist writers and content creators in visualizing their narratives by generating illustrative images from textual descriptions.
- Web Development: Enhance websites with unique, dynamically generated images that improve user engagement and aesthetic appeal.
- Research and Development: Utilize the model for experimental purposes in AI research, testing the boundaries of text-to-image generation capabilities.

Other Popular Models

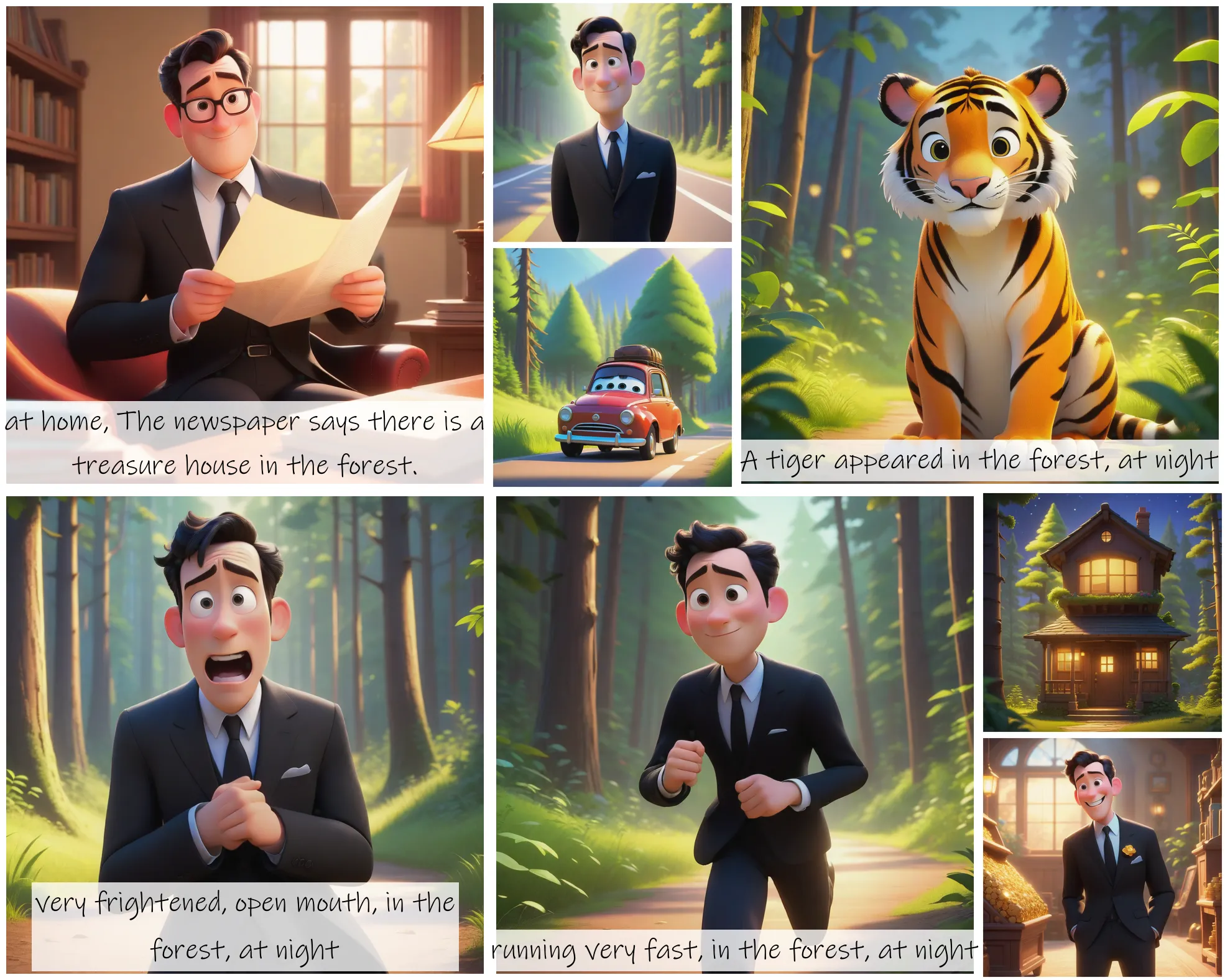

storydiffusion

Story Diffusion turns your written narratives into stunning image sequences.

faceswap-v2

Take a picture or GIF and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset and no training are required.

sdxl-inpaint

This model generates photo-realistic images from any text input and can also inpaint images using a mask.

sd2.1-faceswapper

Take a picture or GIF and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset and no training are required.